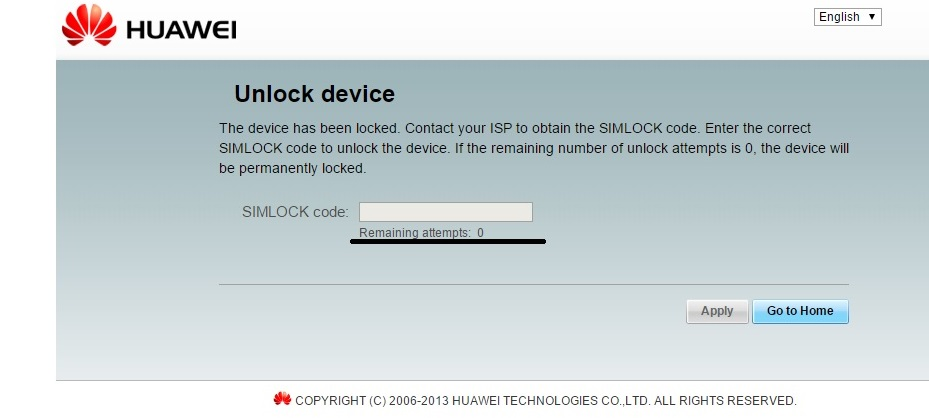

This article was originally posted at the blog.

Not long ago I was intrigued by the Internet mystery (if you haven't heard of it check out this podcast). Friends of the Hunchly mailing list and I embarked on a brief journey to see if we could root out any additional clues or, of course, solve the mystery. One of the major sources of information for the investigation was The Wayback Machine, which is a popular resource for lots of investigations.

For this particular investigation there were a lot of weird images strewn around as clues, and I wondered if it would be possible to retrieve those photos from the Wayback Machine and then examine them for EXIF data to see if we could find authorship details or other tasty nuggets of information. Of course I was not going to do this manually, so I thought it was a perfect opportunity to build out a new tool to do it for me.

We are going to leverage a couple of great tools to make this magic happen. The first is a Python module written by Jeremy Singer-Vine called waybackpack. While you can use waybackpack on the command line as a standalone tool, in this blog post we are going to simply import it and leverage pieces of it to interact with the Wayback Machine.

The second tool is ExifTool, by Phil Harvey. This little beauty is the gold standard when it comes to extracting EXIF information from photos and is trusted the world over.

The goal is for us to pull down all images for a particular URL on the Wayback Machine, extract any EXIF data and then output all of the information into a spreadsheet that we can then go and review.

This post involves a few moving parts, so let's get this boring stuff out of the way first.

On Ubuntu based Linux you can do the following:

Mac OSX users can use Phil's installer here.

For you folks on Windows you will have to do the following:

- Download the ExifTool binary from here.
- Save it to your C:\Python27 directory (you DO have Python installed right?)
- Make sure that C:\Python27 is in your Path. Don't know how to do this? Google will help.

Installing The Necessary Python Libraries

Now we are ready to install the various Python libraries that we need:

`pip install bs4 requests pandas pyexifinfo waybackpack`

Alright let's get down to it shall we?

Coding It Up

Now crack open a new Python file, call it waybackimages.py (download the source here) and start pounding out (use both hands) the following:

- Line 43: we set up our get_image_paths function to receive the Pack object.
- Lines 48-56: we walk through the list of assets (48), and then use the get_archive_url function (51) to hand us a usable URL. We print out a little helper message (53) and then we retrieve the HTML using the fetch function (56).
- Lines 59-60: now that we have the HTML we hand it off to BeautifulSoup (59) so that we can begin parsing the HTML for image tags. The parsing is handled by using the findAll function (60) and passing in the img tag. This will produce a list of all IMG tags discovered in the HTML.
- Lines 63-70: we walk over the list of IMG tags found (64) and we build URLs (67-70) to the images that we can use later to retrieve the images themselves.
- Lines 72-74: if we don't already have the image URL (72) we print out a message (73) and then add the image URL to our list of all images found (74).

Alright! Now that we have extracted all of the image URLs that we can, we need to download them and process them for EXIF data.

- Line 83: we define our download_images function that takes in our big list of image URLs and the original URL we are interested in.
- Lines 85-86: we create our image_results variable (85) to hold all of our EXIF data results and the image_hashes variable (86) to keep track of all of the unique hashes for the images we download.
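To make the walkthrough a bit more concrete, here is a minimal sketch of two of the steps it describes: collecting IMG tags from a page and turning them into a de-duplicated list of image URLs, and tracking unique image hashes so the same picture is only processed once. This is not the code from waybackimages.py. To keep it runnable with no extra installs it uses the standard library's HTMLParser as a stand-in for BeautifulSoup's findAll, builds URLs with urljoin, and hashes with SHA-256. The names ImgCollector, extract_image_urls and is_new_image are all made up for illustration.

```python
import hashlib
from html.parser import HTMLParser
from urllib.parse import urljoin


class ImgCollector(HTMLParser):
    """Hypothetical helper: collects the src attribute of every IMG tag."""

    def __init__(self):
        super().__init__()
        self.sources = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag names, so "IMG" and "img" both match.
        if tag == "img":
            src = dict(attrs).get("src")
            if src:
                self.sources.append(src)


def extract_image_urls(html, page_url, image_urls=None):
    """Return absolute image URLs found in html, skipping ones we already have."""
    image_urls = [] if image_urls is None else image_urls
    collector = ImgCollector()
    collector.feed(html)
    for src in collector.sources:
        # Assumption: resolve relative paths against the page URL.
        image_url = urljoin(page_url, src)
        if image_url not in image_urls:
            print("[+] Found new image: %s" % image_url)
            image_urls.append(image_url)
    return image_urls


def is_new_image(image_bytes, image_hashes):
    """Record the hash of image_bytes; return True only the first time it is seen."""
    # Assumption: SHA-256 is used here, the original script may hash differently.
    digest = hashlib.sha256(image_bytes).hexdigest()
    if digest in image_hashes:
        return False
    image_hashes.add(digest)
    return True
```

You would call extract_image_urls once per archived page, passing the same list back in each time so that an image appearing in many snapshots is only recorded once. Later on, is_new_image can be checked against a shared set before running ExifTool on a downloaded file.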
